There are many different cubes, like the Megaminx, the 2x2, the 4x4, the Pyraminx, the Skewb, and even more. In cubing competitions there are many events you can do: there is blindfolded, feet, and even one-handed solving. I feel that everyone can solve the cube; just look up a video, there are thousands of tutorials. Everyone is very happy for you if you get a new personal best. The time limit to enter a competition is about 5 minutes, so if you can solve it in under that, you can go to one.

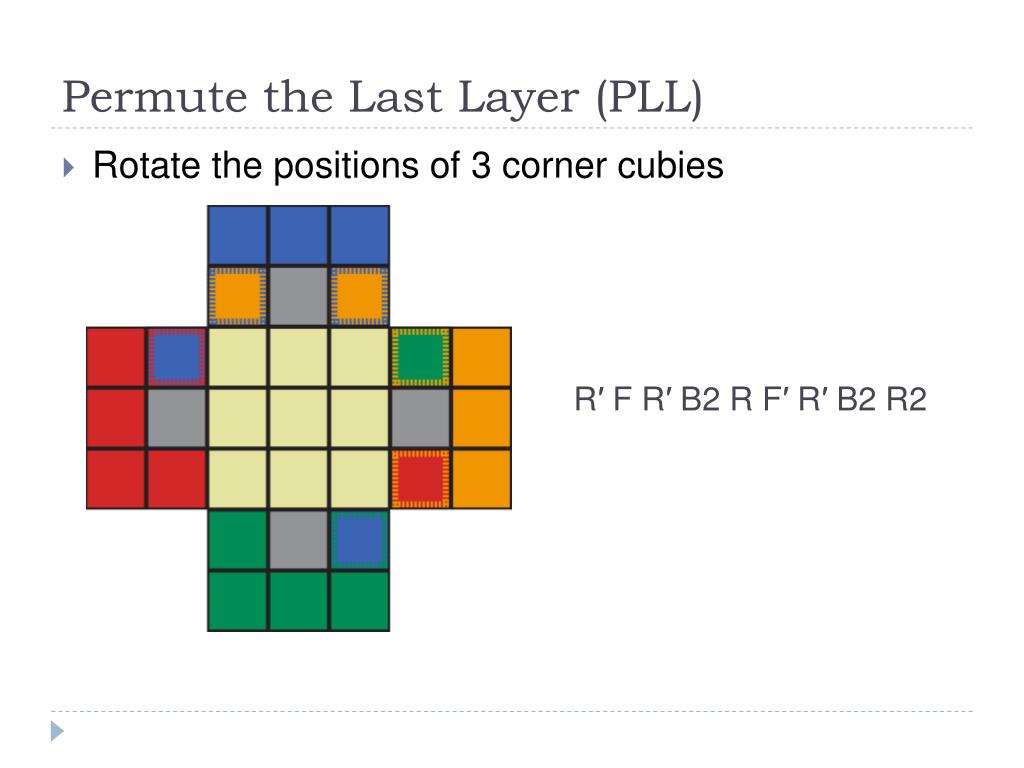

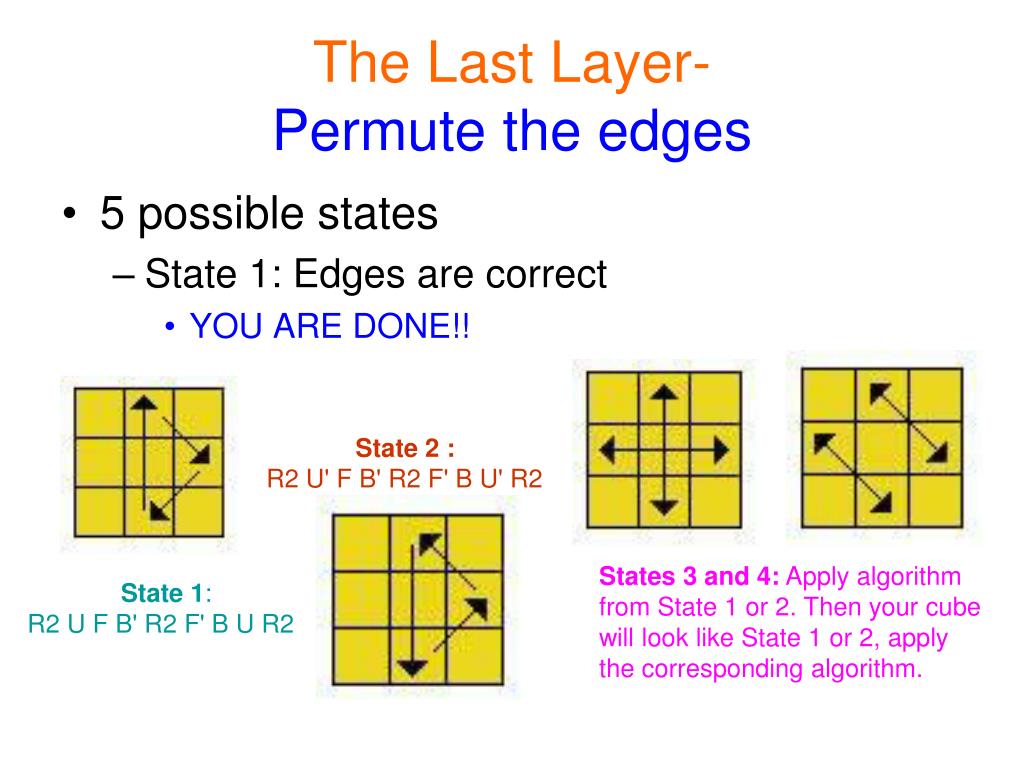

There is a certain way to solve the Rubik's Cube. The steps used to solve it are called algorithms: certain sequences of moves, done in order, that get the cube to the solved state. There are multiple methods for solving the cube. The one that I use is called the Beginner method because, like… I'm a beginner. The most advanced one is called CFOP, which stands for Cross, F2L, OLL, and PLL. All of those acronyms stand for something: F2L stands for First Two Layers, OLL stands for Orient Last Layer, and PLL stands for Permute Last Layer. So that's CFOP.

It didn't take long until competitions started to take place. Now about the World Records that have happened: there was a guy named Ronald Brinkmann who solved it in 19 seconds in 1982. Compare that to the World Record at the time of writing: Yusheng Du solved it in 3.47 seconds. Cubing competitions are a whole community that people love.
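Since an algorithm is just a fixed sequence of moves, you can treat it like data. The following is a minimal sketch that assumes standard face-turn notation, which the text above does not spell out. In that notation, letters such as R, U, and F are clockwise turns, a trailing `'` means counterclockwise, and a trailing `2` means a half turn. The sketch shows that undoing an algorithm means doing its moves in reverse order, each turned the other way. The function names `invert_move` and `invert_algorithm` are hypothetical.

```python
def invert_move(move: str) -> str:
    """Return the move that undoes `move` (assumes standard face-turn notation)."""
    if move.endswith("2") or move.endswith("2'"):
        # A half turn is its own inverse; normalize "R2'" to "R2".
        return move.rstrip("'")
    if move.endswith("'"):
        # A counterclockwise turn is undone by the clockwise turn.
        return move[:-1]
    # A clockwise turn is undone by the counterclockwise turn.
    return move + "'"


def invert_algorithm(algorithm: str) -> str:
    """Undo a space-separated algorithm: reverse the move order, invert each move."""
    moves = algorithm.split()
    return " ".join(invert_move(m) for m in reversed(moves))


# Usage: the common trigger R U R' U' is undone by U R U' R'.
print(invert_algorithm("R U R' U'"))
```

Inverting twice gives back the original algorithm. This is why cubers can "undo" a mistaken algorithm by reading it backwards.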

Permute last layer how to

The first ever Rubik's Cube was invented in 1974 by a person named Erno Rubik, and the community soon started to figure out how to solve it. At first, Rubik himself wanted to learn how to crack the code of the cube. It took him almost 3 months to learn how to solve it.

Permute last layer code

In the original code, the last axis of the output is the axis that n_classes stands for, which is the semantic class axis. Therefore, the original code fulfills the final goal of semantic segmentation. Let's revisit the code that you want to change:

```python
reshape = Reshape((self.img_rows * self.img_cols, n_classes))(conv9)  # L4
```

My guess is that you think L4's output dim matches L2's, and that L4 is therefore a shortcut equivalent to executing L1 and L2. However, matching the shape does not necessarily mean matching the physical meaning of axes.

To see the difference, say you have 2 semantic classes and 3 pixels, and assume all three pixels belong to the same class. In other words, a ground truth tensor has one row per pixel and one column per class (cls#1, cls#2), with the same one-hot label in every row. Now assume you have a perfect network that generates the exact response for each pixel. Your solution will still create a tensor whose shape is the same as the ground truth's but fails to match the physical meaning of axes: its rows no longer correspond to pixels, and its columns no longer correspond to classes. This makes the softmax operation meaningless. Softmax is supposed to apply to the class dimension, but that dimension does not physically exist in the reshaped tensor. As a result, softmax produces an erroneous output that completely messes up training, even under this ideal assumption.

Therefore, it is a good habit to write down the physical meaning of each axis of a tensor. When you do any tensor reshape operation, ask yourself whether the physical meaning of each axis changes in the way you expect. For example, suppose you have a tensor T of shape batch_dim x img_rows x img_cols x feat_dim. You can do many things to it, but not all of them make sense, because some change the physical meaning of the axes:

- (Wrong) reshape it to whatever x feat_dim, because the whatever dimension is meaningless in testing, where the batch size might be different.
- (Wrong) reshape it to batch_dim x feat_dim x img_rows x img_cols, because the 2nd dimension is NOT the feature dimension, and neither are the 3rd and 4th dimensions.
- (Correct) permute axes (3,1,2). This gives you a tensor of shape batch_dim x feat_dim x img_rows x img_cols while keeping the physical meaning of each axis.
- (Correct) reshape it to batch_dim x whatever x feat_dim. This is also valid, because whatever = img_rows x img_cols is equivalent to the pixel location dimension, and the meanings of batch_dim and feat_dim are unchanged.
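The 2-class, 3-pixel argument can be checked numerically. The following is a minimal sketch, not the original Keras model. NumPy's `reshape` and `transpose` stand in for the Keras `Reshape` and `Permute` layers, and the batch axis is left out. It assumes that each sample's raw output (`conv9`) is channels-first, with shape (n_classes, img_rows, img_cols), which is what the reshape-then-permute approach of L1 and L2 implies. It also assumes, arbitrarily, that every pixel belongs to cls#2. The toy sizes and the `softmax` helper are hypothetical.

```python
import numpy as np

# Hypothetical toy sizes: 2 semantic classes and 3 pixels (a 1 x 3 image).
n_classes, img_rows, img_cols = 2, 1, 3
n_pixels = img_rows * img_cols

# Assumption: per-sample output is channels-first, shape
# (n_classes, img_rows, img_cols). A "perfect" network scores every pixel
# 1.0 for cls#2 and 0.0 for cls#1 (all pixels belong to cls#2 here).
conv9 = np.zeros((n_classes, img_rows, img_cols))
conv9[1] = 1.0

# Ground truth: one row per pixel, one column per class (cls#1, cls#2).
ground_truth = np.tile([0.0, 1.0], (n_pixels, 1))

# Reshape to (n_classes, n_pixels), then swap axes (the L1 + L2 idea):
# the class axis moves last and every row is still one pixel.
correct = conv9.reshape(n_classes, n_pixels).transpose(1, 0)

# Reshape straight to (n_pixels, n_classes) (the L4 idea): same shape,
# but values are re-read in memory order, so rows are no longer pixels.
wrong = conv9.reshape(n_pixels, n_classes)


def softmax(x: np.ndarray) -> np.ndarray:
    """Softmax over the last axis, shifted by the row max for stability."""
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


print(np.array_equal(correct, ground_truth))  # True
print(wrong)
print(softmax(wrong).round(2))
```

Here `wrong` comes out as [[0, 0], [0, 1], [1, 1]]. After softmax, pixels 1 and 3 get 0.5 for each class even though the network was assumed to be perfect.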
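The four options for a tensor T of shape batch_dim x img_rows x img_cols x feat_dim can also be checked in a few lines. This is a sketch under stated assumptions: NumPy stands in for Keras, and the sizes are hypothetical. T is filled with distinct values so that any scrambling becomes visible. The test is whether one pixel's feature vector survives each operation intact. In Keras, `Permute((3, 1, 2))` numbers axes from 1 and does not include the batch axis, so the NumPy equivalent is `transpose(0, 3, 1, 2)`.

```python
import numpy as np

# Hypothetical toy sizes; values 0..N-1 make any scrambling visible.
batch_dim, img_rows, img_cols, feat_dim = 2, 3, 4, 5
T = np.arange(batch_dim * img_rows * img_cols * feat_dim, dtype=float)
T = T.reshape(batch_dim, img_rows, img_cols, feat_dim)

# (Correct) permute: NumPy equivalent of Keras Permute((3, 1, 2)).
permuted = T.transpose(0, 3, 1, 2)  # batch_dim x feat_dim x img_rows x img_cols

# (Correct) reshape: merge only img_rows and img_cols into a pixel axis.
pixels = T.reshape(batch_dim, img_rows * img_cols, feat_dim)

# (Wrong) reshape: right target shape, but axis 1 is not the feature axis.
scrambled = T.reshape(batch_dim, feat_dim, img_rows, img_cols)

# (Wrong) reshape: the leading axis mixes batch and pixels, so its size
# changes whenever the batch size does.
merged = T.reshape(-1, feat_dim)

b, r, c = 1, 2, 3  # an arbitrary pixel in an arbitrary sample
features = T[b, r, c, :]
print(np.array_equal(permuted[b, :, r, c], features))            # True
print(np.array_equal(pixels[b, r * img_cols + c, :], features))  # True
print(np.array_equal(scrambled[b, :, r, c], features))           # False
print(merged.shape)  # (24, 5)
```

`permuted` and `scrambled` have the same shape, but only `permuted` still holds each pixel's features along its second axis. That is the "matching shape is not matching meaning" point in miniature.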